milvus: [Bug]: All deploy tests fail due to a timeout in the second test after reinstall or upgrade

Is there an existing issue for this?

- I have searched the existing issues

Environment

- Milvus version: master-20220607-af108b5b

- Deployment mode(standalone or cluster): both

- SDK version(e.g. pymilvus v2.0.0rc2): pymilvus==2.1.0.dev69

- OS(Ubuntu or CentOS):

- CPU/Memory:

- GPU:

- Others:

Current Behavior

All tasks time out when running the second test after a reinstall or upgrade.

After repeatedly printing logs like the ones below, the proxy stops producing any log output. If you then stop the client program manually, the proxy reports the errors shown at the end of the log.

[2022/06/08 03:47:28.965 +00:00] [DEBUG] [id.go:140] ["IDAllocator pickCanDoFunc"] [need=1] [total=199911] [remainReqCnt=0]

[2022/06/08 03:47:28.965 +00:00] [DEBUG] [impl.go:760] ["GetCollectionStatistics enqueued"] [traceID=35cd4e2502ddc161] [role=proxy] [MsgID=433759203819257946] [BeginTS=433759203866443796] [EndTS=433759203866443796] [db=] [collection=task_1_BIN_IVF_FLAT]

[2022/06/08 03:47:28.966 +00:00] [DEBUG] [impl.go:793] ["GetCollectionStatistics done"] [traceID=35cd4e2502ddc161] [role=proxy] [MsgID=433759203819257946] [BeginTS=433759203866443796] [EndTS=433759203866443796] [db=] [collection=task_1_BIN_IVF_FLAT]

[2022/06/08 03:47:28.967 +00:00] [DEBUG] [impl.go:361] ["HasCollection received"] [traceID=69e62636d8fad8a2] [role=proxy] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.967 +00:00] [DEBUG] [id.go:140] ["IDAllocator pickCanDoFunc"] [need=1] [total=199910] [remainReqCnt=0]

[2022/06/08 03:47:28.967 +00:00] [DEBUG] [impl.go:392] ["HasCollection enqueued"] [traceID=69e62636d8fad8a2] [role=proxy] [MsgID=433759203819257947] [BeginTS=433759203866443797] [EndTS=433759203866443797] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.967 +00:00] [DEBUG] [impl.go:422] ["HasCollection done"] [traceID=69e62636d8fad8a2] [role=proxy] [MsgID=433759203819257947] [BeginTS=433759203866443797] [EndTS=433759203866443797] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.968 +00:00] [DEBUG] [impl.go:640] ["DescribeCollection received"] [traceID=5e62470ab19e2eb6] [role=proxy] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.969 +00:00] [DEBUG] [id.go:140] ["IDAllocator pickCanDoFunc"] [need=1] [total=199909] [remainReqCnt=0]

[2022/06/08 03:47:28.969 +00:00] [DEBUG] [impl.go:664] ["DescribeCollection enqueued"] [traceID=5e62470ab19e2eb6] [role=proxy] [MsgID=433759203819257948] [BeginTS=433759203866443798] [EndTS=433759203866443798] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.969 +00:00] [DEBUG] [impl.go:697] ["DescribeCollection done"] [traceID=5e62470ab19e2eb6] [role=proxy] [MsgID=433759203819257948] [BeginTS=433759203866443798] [EndTS=433759203866443798] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.971 +00:00] [DEBUG] [impl.go:545] ["ReleaseCollection received"] [traceID=5a7b07d9878ea3f6] [role=proxy] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 03:47:28.971 +00:00] [DEBUG] [id.go:140] ["IDAllocator pickCanDoFunc"] [need=1] [total=199908] [remainReqCnt=0]

[2022/06/08 03:47:28.971 +00:00] [DEBUG] [impl.go:569] ["ReleaseCollection enqueued"] [traceID=5a7b07d9878ea3f6] [role=proxy] [MsgID=433759203819257949] [BeginTS=433759203877191681] [EndTS=433759203877191681] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 04:11:37.056 +00:00] [WARN] [impl.go:580] ["ReleaseCollection failed to WaitToFinish"] [error="proxy TaskCondition context Done"] [traceID=5a7b07d9878ea3f6] [role=proxy] [MsgID=433759203819257949] [BeginTS=433759203877191681] [EndTS=433759203877191681] [db=] [collection=task_1_BIN_FLAT]

[2022/06/08 04:11:37.056 +00:00] [WARN] [client.go:245] ["querycoord ClientBase ReCall grpc first call get error "] [error="err: rpc error: code = Canceled desc = context canceled\n, /go/src/github.com/milvus-io/milvus/internal/util/trace/stack_trace.go:51 github.com/milvus-io/milvus/internal/util/trace.StackTrace\n/go/src/github.com/milvus-io/milvus/internal/util/grpcclient/client.go:244 github.com/milvus-io/milvus/internal/util/grpcclient.(*ClientBase).ReCall\n/go/src/github.com/milvus-io/milvus/internal/distributed/querycoord/client/client.go:183 github.com/milvus-io/milvus/internal/distributed/querycoord/client.(*Client).ReleaseCollection\n/go/src/github.com/milvus-io/milvus/internal/proxy/task.go:2847 github.com/milvus-io/milvus/internal/proxy.(*releaseCollectionTask).Execute\n/go/src/github.com/milvus-io/milvus/internal/proxy/task_scheduler.go:465 github.com/milvus-io/milvus/internal/proxy.(*taskScheduler).processTask\n/go/src/github.com/milvus-io/milvus/internal/proxy/task_scheduler.go:494 github.com/milvus-io/milvus/internal/proxy.(*taskScheduler).definitionLoop\n/usr/local/go/src/runtime/asm_amd64.s:1371 runtime.goexit\n"]

[2022/06/08 04:11:37.057 +00:00] [ERROR] [task_scheduler.go:468] ["Failed to execute task: context canceled"] [traceID=5a7b07d9878ea3f6] [stack="github.com/milvus-io/milvus/internal/proxy.(*taskScheduler).processTask\n\t/go/src/github.com/milvus-io/milvus/internal/proxy/task_scheduler.go:468\ngithub.com/milvus-io/milvus/internal/proxy.(*taskScheduler).definitionLoop\n\t/go/src/github.com/milvus-io/milvus/internal/proxy/task_scheduler.go:494"]

[2022/06/08 04:12:28.458 +00:00] [WARN] [channels_time_ticker.go:95] ["Proxy channelsTimeTickerImpl failed to get ts from tso"] [error="syncTimeStamp Failed:StateCode=Abnormal"]

[2022/06/08 04:12:28.458 +00:00] [WARN] [channels_time_ticker.go:162] [channelsTimeTickerImpl.tickLoop] [error="syncTimeStamp Failed:StateCode=Abnormal"]

[2022/06/08 04:12:28.458 +00:00] [WARN] [proxy.go:291] [sendChannelsTimeTickLoop.UpdateChannelTimeTick] [ErrorCode=UnexpectedError] [Reason="StateCode=Abnormal"]
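The hang is easiest to see as a gap between consecutive log timestamps. The following is a minimal sketch, not part of Milvus or its test suite, that scans proxy log lines and reports the largest silent interval. The helper names `parse_ts` and `largest_gap` are hypothetical, the sample lines are abbreviated copies of the log lines above, and the sketch ignores the timezone offset because every line here uses `+00:00`.

```python
import re
from datetime import datetime

# Matches the leading "[YYYY/MM/DD HH:MM:SS.mmm +00:00]" stamp of a log line.
TS_RE = re.compile(
    r"^\[(\d{4}/\d{2}/\d{2} \d{2}:\d{2}:\d{2}\.\d{3}) [+-]\d{2}:\d{2}\]"
)

# Abbreviated copies of three lines from the log above (trailing fields dropped).
SAMPLE = [
    '[2022/06/08 03:47:28.971 +00:00] [DEBUG] [impl.go:545] ["ReleaseCollection received"]',
    '[2022/06/08 03:47:28.971 +00:00] [DEBUG] [impl.go:569] ["ReleaseCollection enqueued"]',
    '[2022/06/08 04:11:37.056 +00:00] [WARN] [impl.go:580] ["ReleaseCollection failed to WaitToFinish"]',
]


def parse_ts(line):
    """Return the timestamp of a log line, or None if the line has no stamp."""
    match = TS_RE.match(line)
    if match is None:
        return None
    return datetime.strptime(match.group(1), "%Y/%m/%d %H:%M:%S.%f")


def largest_gap(lines):
    """Return (seconds, previous_ts, next_ts) for the longest silent interval."""
    stamps = [ts for ts in map(parse_ts, lines) if ts is not None]
    best = None
    for prev, cur in zip(stamps, stamps[1:]):
        gap = (cur - prev).total_seconds()
        if best is None or gap > best[0]:
            best = (gap, prev, cur)
    return best


gap_seconds, silent_from, silent_until = largest_gap(SAMPLE)
print(f"silent for {gap_seconds:.3f}s, from {silent_from} until {silent_until}")
```

On the sample lines, the reported gap is the roughly 24 minutes (1448.085 seconds) between "ReleaseCollection enqueued" and "ReleaseCollection failed to WaitToFinish", which is the window in which the proxy logged nothing.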

Expected Behavior

All test cases pass.

Steps To Reproduce

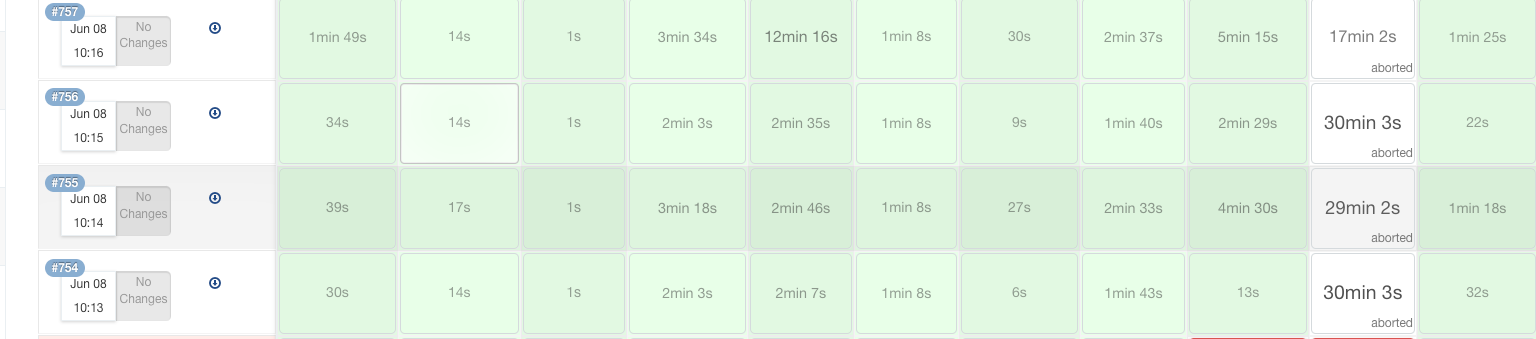

see http://10.100.32.144:8080/blue/organizations/jenkins/deploy_test/detail/deploy_test/761/pipeline

Milvus Log

failed job: http://10.100.32.144:8080/blue/organizations/jenkins/deploy_test/detail/deploy_test/761/pipeline

log: artifacts-cluster-reinstall-761-logs.tar.gz

Anything else?

All runs failed in both GitHub Actions and Jenkins.

https://github.com/milvus-io/milvus/actions/runs/2457295448/attempts/1

About this issue

- State: closed

- Created 2 years ago

- Comments: 20 (20 by maintainers)

The root cause is that metaTable.segID2IndexMeta is restored incorrectly after a rootcoord restart. As a result, the index info stored for each segment ID contains the index info of every segment in the collection, not just that segment's own.
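To make the symptom concrete, here is a minimal sketch of how a per-segment map can end up holding collection-wide data when it is rebuilt from persisted records. This is an illustration only: it is not the rootcoord code, and the record layout, the IDs, and the function names `restore_buggy` and `restore_per_segment` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical persisted records: (collection_id, segment_id, index_build_id).
RECORDS = [
    (1, 100, 9001),
    (1, 101, 9002),
    (1, 102, 9003),
]


def restore_buggy(records):
    """Reproduce the described symptom: each segment gets every index entry
    of its collection instead of only its own."""
    per_collection = defaultdict(set)
    for coll_id, _seg_id, build_id in records:
        per_collection[coll_id].add(build_id)

    seg_to_index = {}
    for coll_id, seg_id, _build_id in records:
        # Wrong key granularity: the value is collection-wide, not per-segment.
        seg_to_index[seg_id] = set(per_collection[coll_id])
    return seg_to_index


def restore_per_segment(records):
    """Rebuild the map so that each segment only sees its own index entries."""
    seg_to_index = defaultdict(set)
    for _coll_id, seg_id, build_id in records:
        seg_to_index[seg_id].add(build_id)
    return dict(seg_to_index)


print(restore_buggy(RECORDS)[100])        # three entries, two of them foreign
print(restore_per_segment(RECORDS)[100])  # only the segment's own entry
```

In this sketch the two restore paths differ only in the granularity of the key used while rebuilding, which is the kind of mistake that stays invisible until a restart forces the map to be reloaded.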