linkerd2: linkerd-controller causing outage in 2.2.0?

Bug Report

What is the issue?

Numerous times this week, sites behind our ingress controllers have been unavailable. Digging into it, the following warning is flooding the ingress controller logs:

WARN admin={bg=resolver} linkerd2_proxy::control::destination::background::destination_set Destination.Get stream errored for NameAddr { name: "myapp.ns.svc.cluster.local", port: 80 }: Grpc(Status { code: Unknown, message: "grpc-status header missing, mapped from HTTP status code 500" })

At the same time, the following error is flooding the logs of one of the linkerd-controller pods (I have 3 running):

linkerd-controller-5fcc8cb6fd-zdrtb linkerd-proxy ERR! proxy={server=in listen=0.0.0.0:4143 remote=10.1.64.5:56422} linkerd2_proxy::proxy::http::router service error: in-flight limit exceeded

If I restart that controller pod, another pod starts flooding the same errors. Restarting that second pod restores service.
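To see which controller pod is currently emitting the error before deciding which one to restart, the proxy logs can be counted per pod. This is a minimal sketch, assuming `kubectl` access to the cluster, the `linkerd` namespace, the `linkerd-proxy` container name seen in the log line above, and the `linkerd.io/control-plane-component=controller` label. The function names and the 10-minute window are illustrative and not part of Linkerd.

```shell
#!/bin/sh
# Count "in-flight limit exceeded" lines read from stdin.
# grep -c prints 0 and exits non-zero when nothing matches, hence "|| true".
count_inflight_errors() {
  grep -c 'in-flight limit exceeded' || true
}

# Print "<pod> <error count>" for every controller pod, based on the
# last 10 minutes of its linkerd-proxy container logs.
# Assumption: namespace "linkerd" and the controller label shown here.
report_controller_errors() {
  for pod in $(kubectl -n linkerd get pods \
      -l linkerd.io/control-plane-component=controller -o name); do
    n=$(kubectl -n linkerd logs "$pod" -c linkerd-proxy --since=10m \
        | count_inflight_errors)
    echo "$pod $n"
  done
}
```

Calling `report_controller_errors` prints one line per controller pod. A pod whose count is much higher than the others is presumably the one flooding the error.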

Here’s the status of the linkerd namespace after restarting the 2 pods:

| NAME | READY | STATUS | RESTARTS | AGE |
| --- | --- | --- | --- | --- |
| linkerd-ca-cd9844bdb-26zjc | 2/2 | Running | 0 | 15d |
| linkerd-controller-5fcc8cb6fd-2h684 | 4/4 | Running | 0 | 3d10h |
| linkerd-controller-5fcc8cb6fd-mg95n | 4/4 | Running | 0 | 95s |
| linkerd-controller-5fcc8cb6fd-tvh8d | 4/4 | Running | 0 | 118s |
| linkerd-grafana-5b9d774cf6-wtwkb | 2/2 | Running | 0 | 15d |
| linkerd-prometheus-74ff76f8c4-6dq72 | 2/2 | Running | 0 | 15d |
| linkerd-proxy-injector-76875f8445-gkb44 | 2/2 | Running | 1 | 15d |
| linkerd-web-78ff9c6758-mqsws | 2/2 | Running | 0 | 10d |

How can it be reproduced?

Unknown

Logs, error output, etc

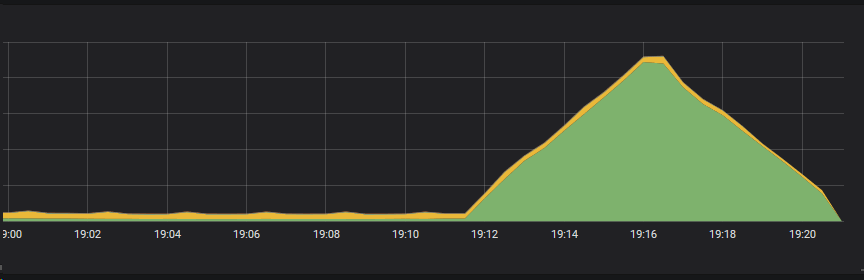

CPU usage of one of the linkerd-controller pods (peak is 0.20)

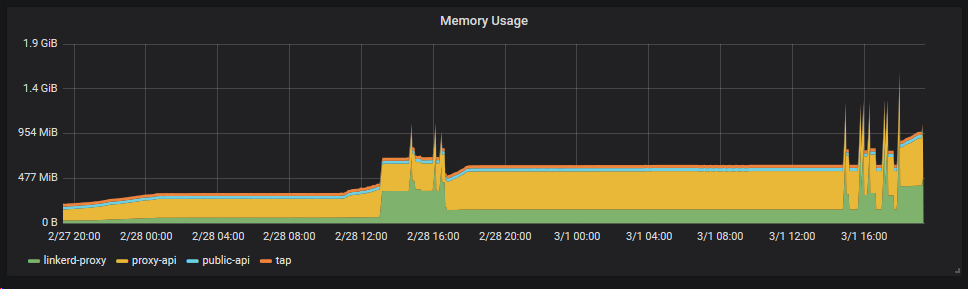

Memory usage over the last 2 days (potentially unimportant and due to issue #2382)

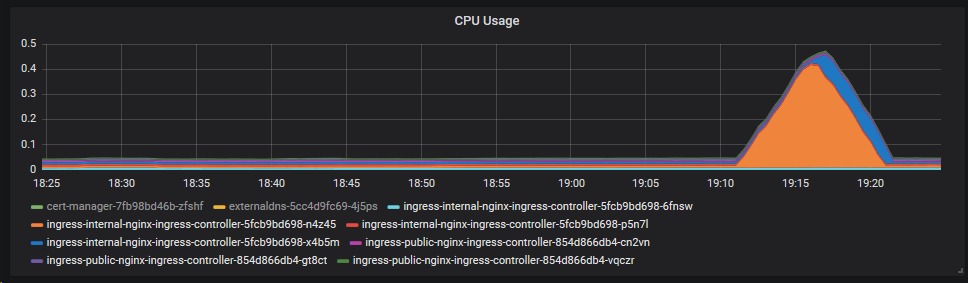

It also caused a CPU spike in 2 of the 3 ingress controllers at the time.

linkerd check output

l check

kubernetes-api

--------------

√ can initialize the client

√ can query the Kubernetes API

kubernetes-version

------------------

√ is running the minimum Kubernetes API version

linkerd-existence

-----------------

√ control plane namespace exists

√ controller pod is running

√ can initialize the client

√ can query the control plane API

linkerd-api

-----------

√ control plane pods are ready

√ can query the control plane API

√ [kubernetes] control plane can talk to Kubernetes

√ [prometheus] control plane can talk to Prometheus

linkerd-service-profile

-----------------------

√ no invalid service profiles

linkerd-version

---------------

√ can determine the latest version

‼ cli is up-to-date

is running version 2.2.0 but the latest stable version is 2.2.1

see https://linkerd.io/checks/#l5d-version-cli for hints

control-plane-version

---------------------

‼ control plane is up-to-date

is running version 2.2.0 but the latest stable version is 2.2.1

see https://linkerd.io/checks/#l5d-version-control for hints

√ control plane and cli versions match

Status check results are √

l check --proxy

kubernetes-api

--------------

√ can initialize the client

√ can query the Kubernetes API

kubernetes-version

------------------

√ is running the minimum Kubernetes API version

linkerd-existence

-----------------

√ control plane namespace exists

√ controller pod is running

√ can initialize the client

√ can query the control plane API

linkerd-api

-----------

√ control plane pods are ready

√ can query the control plane API

√ [kubernetes] control plane can talk to Kubernetes

√ [prometheus] control plane can talk to Prometheus

linkerd-service-profile

-----------------------

√ no invalid service profiles

linkerd-version

---------------

√ can determine the latest version

‼ cli is up-to-date

is running version 2.2.0 but the latest stable version is 2.2.1

see https://linkerd.io/checks/#l5d-version-cli for hints

linkerd-data-plane

------------------

√ data plane namespace exists

√ data plane proxies are ready

√ data plane proxy metrics are present in Prometheus

‼ data plane is up-to-date

myapp/cms-76bcf796fd-r2bp9: is running version 2.2.0 but the latest stable version is 2.2.1

see https://linkerd.io/checks/#l5d-data-plane-version for hints

√ data plane and cli versions match

Status check results are √

Environment

- Kubernetes Version: 1.13.3

- Cluster Environment: Azure - AKS-Engine 0.31.0

- Host OS:

- Linkerd version: 2.2.0

Possible solution

while true; do k -n linkerd delete pod -l linkerd.io/control-plane-component=controller; sleep 1h; done
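A gentler variant of the same workaround would restart the controller pods one at a time, so that the remaining replicas keep serving while each replacement comes up. This is only a sketch under assumptions: the deployment name `linkerd-controller` is inferred from the pod names above, `rolling_restart_controllers` is a hypothetical helper, and it has not been verified that a one-by-one restart clears the error.

```shell
#!/bin/sh
# Restart controller pods one at a time instead of all at once.
# Assumptions: the deployment is named "linkerd-controller" (inferred from
# the pod names) and kubectl is configured for the cluster.
rolling_restart_controllers() {
  ns=${1:-linkerd}
  for pod in $(kubectl -n "$ns" get pods \
      -l linkerd.io/control-plane-component=controller -o name); do
    # Delete one pod; the deployment schedules a replacement.
    kubectl -n "$ns" delete "$pod" || return 1
    # Wait until the deployment reports its replicas as available again.
    kubectl -n "$ns" rollout status deployment/linkerd-controller || return 1
  done
}
```

It could be called as `rolling_restart_controllers linkerd`, either by hand or from the hourly loop above in place of the bulk delete.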

Additional context

About this issue

- Original URL

- State: closed

- Created 5 years ago

- Comments: 25 (24 by maintainers)

@jon-walton I’m not convinced they’re the same underlying issue; that error message could have a number of causes. I just thought it was worth comparing the reports to see if there was anything in common.