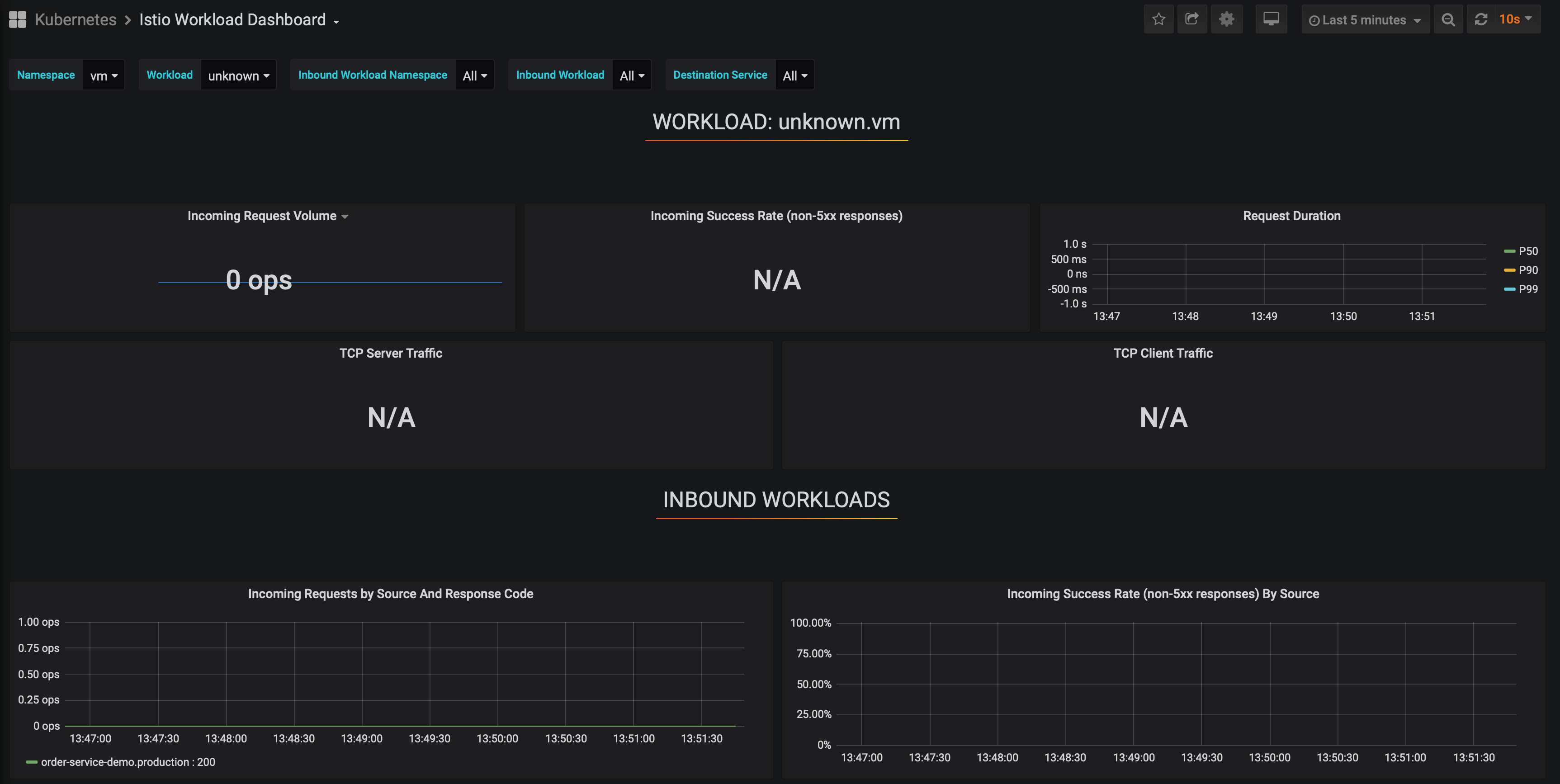

istio: Mesh Expansion: Istio Agent on VM displayed in metrics as "unknown"

The problem is that there is no way to identify the application that was added to the service mesh from the VM: in the Envoy proxy metrics it is reported as unknown.

Affected product area (please put an X in all that apply)

[ ] Configuration Infrastructure [ ] Docs [ ] Installation [ ] Networking [ ] Performance and Scalability [X] Policies and Telemetry [ ] Security [ ] Test and Release [ ] User Experience [ ] Developer Infrastructure

Affected features (please put an X in all that apply)

[ ] Multi Cluster [X] Virtual Machine [ ] Multi Control Plane

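Expected behavior

The workload running on the VM should be identifiable in the Envoy proxy metrics (for example in destination_workload and destination_app) instead of being reported as unknown.

Steps to reproduce the bug

1. Install Istio 1.6.5 with istioctl, using this spec.yml: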

    cat spec.yml
    apiVersion: install.istio.io/v1alpha1
    kind: IstioOperator
    spec:
      profile: default
      values:
        global:
          defaultNodeSelector:
            dedicated: infra
          meshExpansion:
            enabled: true
        telemetry:
          enabled: true
        grafana:
          enabled: false
        tracing:
          enabled: true
        prometheus:
          enabled: false
        kiali:
          enabled: true

2. Install the Istio proxy package 1.6.5 (istio-sidecar.deb) on the virtual machine, which runs Ubuntu 16.04.4 LTS (Xenial Xerus).

3. Configure the cluster.env file for the Istio Envoy proxy:

    cat /var/lib/istio/envoy/cluster.env
    ISTIO_CP_AUTH=MUTUAL_TLS
    ISTIO_SERVICE_CIDR=172.16.206.0/23
    ISTIO_INBOUND_PORTS=8080
    ISTIO_NAMESPACE=vm
    ISTIO_SERVICE=vmhttp
    ISTIO_INBOUND_INTERCEPTION_MODE=REDIRECT
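The cluster.env above is a plain KEY=VALUE file. As an optional pre-flight check, a minimal stand-alone sketch (not part of the Istio tooling; the set of required keys is an assumption based on this file) loads it and confirms that the service IPs seen later, such as 172.16.206.40 for vmhttp, fall inside ISTIO_SERVICE_CIDR, the outbound IP range redirected to the sidecar:

```python
import ipaddress
from typing import Dict, Iterable, List


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines, ignoring blank lines and # comments."""
    env: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"not a KEY=VALUE line: {raw!r}")
        env[key.strip()] = value.strip()
    return env


def missing_keys(env: Dict[str, str], required: Iterable[str]) -> List[str]:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not env.get(key)]


def in_service_cidr(env: Dict[str, str], ip: str) -> bool:
    """True if ip lies inside ISTIO_SERVICE_CIDR."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(env["ISTIO_SERVICE_CIDR"])


# Hypothetical list of keys this particular setup relies on.
REQUIRED = ("ISTIO_SERVICE_CIDR", "ISTIO_INBOUND_PORTS", "ISTIO_NAMESPACE", "ISTIO_SERVICE")

# Contents of /var/lib/istio/envoy/cluster.env from step 3.
CLUSTER_ENV = """\
ISTIO_CP_AUTH=MUTUAL_TLS
ISTIO_SERVICE_CIDR=172.16.206.0/23
ISTIO_INBOUND_PORTS=8080
ISTIO_NAMESPACE=vm
ISTIO_SERVICE=vmhttp
ISTIO_INBOUND_INTERCEPTION_MODE=REDIRECT
"""

env = parse_env(CLUSTER_ENV)
print(missing_keys(env, REQUIRED))            # []
print(in_service_cidr(env, "172.16.206.40"))  # True  (vmhttp service IP)
print(in_service_cidr(env, "172.16.100.64"))  # False (the VM's own IP)
```

On a real VM the file would be read from disk instead of the inline string.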

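4. Generate a private key and certificate, fetch the root CA certificate, and copy the generated files into the certificate directory (the agent log below shows it using /etc/certs):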

    go run istio.io/istio/security/tools/generate_cert \
      -client -host spiffe://cluster.local/vm/default --out-priv key.pem --out-cert cert-chain.pem -mode citadel
    kubectl -n istio-system get cm istio-ca-root-cert -o jsonpath='{.data.root-cert\.pem}' > root-cert.pem
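Istio workload certificates carry a SPIFFE identity of the form spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>, and when mTLS is in use that identity is what shows up as source_principal or destination_principal. A small illustrative helper (not an Istio API; the namespace vm and service account default are assumptions read off the -host value above) builds such a URI and checks the shape of a -host value:

```python
import re

# Istio identity layout: spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>
_SPIFFE_RE = re.compile(r"^spiffe://([^/]+)/ns/([^/]+)/sa/([^/]+)$")


def istio_spiffe_id(trust_domain: str, namespace: str, service_account: str) -> str:
    """Build an Istio-style SPIFFE URI."""
    return f"spiffe://{trust_domain}/ns/{namespace}/sa/{service_account}"


def is_istio_spiffe_id(uri: str) -> bool:
    """True if uri has the spiffe://<td>/ns/<ns>/sa/<sa> shape."""
    return _SPIFFE_RE.match(uri) is not None


print(istio_spiffe_id("cluster.local", "vm", "default"))
# spiffe://cluster.local/ns/vm/sa/default
print(is_istio_spiffe_id("spiffe://cluster.local/ns/vm/sa/default"))  # True
print(is_istio_spiffe_id("spiffe://cluster.local/vm/default"))        # False
```

A value without the /ns/ and /sa/ segments, such as spiffe://cluster.local/vm/default, does not match this layout.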

5. Configure /etc/hosts so that the VM can connect directly to the Istiod control plane.

6. Start the agent: systemctl start istio

The agent logs:

    cat /var/log/istio/istio.log
    2020-07-17T06:32:26.963522Z info FLAG: --concurrency="0"
    2020-07-17T06:32:26.963567Z info FLAG: --disableInternalTelemetry="false"
    2020-07-17T06:32:26.963573Z info FLAG: --domain=""
    2020-07-17T06:32:26.963576Z info FLAG: --help="false"
    2020-07-17T06:32:26.963579Z info FLAG: --id=""
    2020-07-17T06:32:26.963582Z info FLAG: --ip=""
    2020-07-17T06:32:26.963585Z info FLAG: --log_as_json="false"
    2020-07-17T06:32:26.963588Z info FLAG: --log_caller=""
    2020-07-17T06:32:26.963591Z info FLAG: --log_output_level="default:info"
    2020-07-17T06:32:26.963594Z info FLAG: --log_rotate=""
    2020-07-17T06:32:26.963596Z info FLAG: --log_rotate_max_age="30"
    2020-07-17T06:32:26.963599Z info FLAG: --log_rotate_max_backups="1000"
    2020-07-17T06:32:26.963602Z info FLAG: --log_rotate_max_size="104857600"
    2020-07-17T06:32:26.963605Z info FLAG: --log_stacktrace_level="default:none"
    2020-07-17T06:32:26.963611Z info FLAG: --log_target="[stdout]"
    2020-07-17T06:32:26.963617Z info FLAG: --meshConfig="./etc/istio/config/mesh"
    2020-07-17T06:32:26.963619Z info FLAG: --mixerIdentity=""
    2020-07-17T06:32:26.963622Z info FLAG: --outlierLogPath=""
    2020-07-17T06:32:26.963624Z info FLAG: --pilotIdentity=""
    2020-07-17T06:32:26.963627Z info FLAG: --proxyComponentLogLevel="misc:error"
    2020-07-17T06:32:26.963630Z info FLAG: --proxyLogLevel="warning"
    2020-07-17T06:32:26.963633Z info FLAG: --serviceCluster="istio-proxy"
    2020-07-17T06:32:26.963636Z info FLAG: --serviceregistry="Kubernetes"
    2020-07-17T06:32:26.963638Z info FLAG: --stsPort="0"
    2020-07-17T06:32:26.963642Z info FLAG: --templateFile=""
    2020-07-17T06:32:26.963645Z info FLAG: --tokenManagerPlugin="GoogleTokenExchange"
    2020-07-17T06:32:26.963647Z info FLAG: --trust-domain=""
    2020-07-17T06:32:26.963669Z info Version 1.6.5-f508fdd78eb0d3444e2bc2b3f36966d904c5db52-dirty-Modified
    2020-07-17T06:32:26.963791Z info Obtained private IP [172.16.100.64]
    2020-07-17T06:32:26.963809Z warn failed to read pod annotations: open ./etc/istio/pod/annotations: no such file or directory
    2020-07-17T06:32:26.963840Z info Apply proxy config from env
    serviceCluster: vmhttp
    controlPlaneAuthPolicy: MUTUAL_TLS
    discoveryAddress: istiod.istio-system.svc:15012

    2020-07-17T06:32:26.964926Z info Effective config: binaryPath: /usr/local/bin/envoy
    configPath: ./etc/istio/proxy
    controlPlaneAuthPolicy: MUTUAL_TLS
    discoveryAddress: istiod.istio-system.svc:15012
    drainDuration: 45s
    envoyAccessLogService: {}
    envoyMetricsService: {}
    parentShutdownDuration: 60s
    proxyAdminPort: 15000
    serviceCluster: istio-proxy
    statNameLength: 189
    statusPort: 15020
    tracing:
      zipkin:
        address: zipkin.istio-system:9411

    2020-07-17T06:32:26.964961Z info Proxy role: &model.Proxy{ClusterID:"", Type:"sidecar", IPAddresses:[]string{"172.16.100.64"}, ID:"atlas-nsk-dev2-php7-01.vm", Locality:(*envoy_api_v2_core.Locality)(nil), DNSDomain:"vm.svc.cluster.local", ConfigNamespace:"", Metadata:(*model.NodeMetadata)(nil), SidecarScope:(*model.SidecarScope)(nil), PrevSidecarScope:(*model.SidecarScope)(nil), MergedGateway:(*model.MergedGateway)(nil), ServiceInstances:[]*model.ServiceInstance(nil), IstioVersion:(*model.IstioVersion)(nil), ipv6Support:false, ipv4Support:false, GlobalUnicastIP:"", XdsResourceGenerator:model.XdsResourceGenerator(nil), Active:map[string]*model.WatchedResource(nil)}
    2020-07-17T06:32:26.964965Z info JWT policy is third-party-jwt
    2020-07-17T06:32:26.964986Z warn Using existing certificate /etc/certs
    2020-07-17T06:32:26.964994Z info PilotSAN []string{"istiod.istio-system.svc"}
    2020-07-17T06:32:26.964998Z info MixerSAN []string{"spiffe://cluster.local/ns/istio-system/sa/istio-mixer-service-account"}
    2020-07-17T06:32:27.011964Z info serverOptions.CAEndpoint == istiod.istio-system.svc:15012
    2020-07-17T06:32:27.011991Z info Using user-configured CA istiod.istio-system.svc:15012
    2020-07-17T06:32:27.011995Z info istiod uses self-issued certificate
    2020-07-17T06:32:27.012017Z info the CA cert of istiod is: -----BEGIN CERTIFICATE-----
    MIIC3jCCAcagAwIBAgIRAPL91dGPftxQLtq/2sOn27IwDQYJKoZIhvcNAQELBQAw
    GDEWMBQGA1UEChMNY2x1c3Rlci5sb2NhbDAeFw0yMDA3MTQwNjExNTNaFw0zMDA3
    MTIwNjExNTNaMBgxFjAUBgNVBAoTDWNsdXN0ZXIubG9jYWwwggEiMA0GCSqGSIb3
    DQEBAQUAA4IBDwAwggEKAoIBAQDlymV3cPzsTREOhnqGOumJORiYPeew/yVcuXWO
    34ZrUkJO/qpYM7cGJqxR3Mi1RdfzjRnwBn3IMricrNONH3WwtG+OXjqZ+GkJTw8R
    OBpx79tSQ3QAV3aK6V2Dkxipz6DWKv1Kksmmoycb3L4A/nXUCdG2cXghXzM7hNgN
    M9K9E8rHIscRL2upz+jBLH5pMZ33hE4D89dpFa9A0eZVAvLz7OW4P3wcOpDGNrLC
    jK1OWs9eK9dTW0V7zkxax2fR/EL5Lc7SMKeq/SLJMrzH5DFBnhoW+pLhl1E9ciPS
    Er30ml/8TxKqkW27o+t2ZNla/8wUeWAMIvc5hmkplbhcTJCjAgMBAAGjIzAhMA4G
    A1UdDwEB/wQEAwICBDAPBgNVHRMBAf8EBTADAQH/MA0GCSqGSIb3DQEBCwUAA4IB
    AQAAxA1pQTcU4jKDRNubT0jMHIdLMOSeejaVAHkRmv+1pmKx4c85VV7cFTSBzaBk
    VEIeQfpJTVDmUBl7OIPApFH0AKZAogzNR0w5mE5Nip0bny9gODivOXzpED3gf+5S
    CmeUgiBfgotxp+74GYOoelgINpO9KP0aHwdR9wMSjvo3Z3RoGyyNvQHoGFkKaRg3
    HSijy4UuoZIvTSX7NClDwb8i8tK78h2iOHxSZBGT9feq9D2XWPLw9aOW4kqqd/8I
    P0boMl2TIPzc2goDt3ZaWCsDCqisZuy1YDXTbY14XQA/hWNfvqbF0xr85DzXECFh
    f/t8Aa09JOuHirbgQAhHrAGX
    -----END CERTIFICATE-----
    2020-07-17T06:32:27.012121Z info parsed scheme: ""
    2020-07-17T06:32:27.012130Z info scheme "" not registered, fallback to default scheme
    2020-07-17T06:32:27.012170Z info ccResolverWrapper: sending update to cc: {[{istiod.istio-system.svc:15012 <nil> 0 <nil>}] <nil> <nil>}
    2020-07-17T06:32:27.012184Z info ClientConn switching balancer to "pick_first"
    2020-07-17T06:32:27.012188Z info Channel switches to new LB policy "pick_first"
    2020-07-17T06:32:27.012217Z info Subchannel Connectivity change to CONNECTING
    2020-07-17T06:32:27.012315Z info Subchannel picks a new address "istiod.istio-system.svc:15012" to connect
    2020-07-17T06:32:27.012382Z info sds SDS gRPC server for workload UDS starts, listening on "./etc/istio/proxy/SDS"
    2020-07-17T06:32:27.012387Z info pickfirstBalancer: HandleSubConnStateChange: 0xc000dc7500, {CONNECTING <nil>}
    2020-07-17T06:32:27.012434Z info Starting proxy agent
    2020-07-17T06:32:27.012442Z info Channel Connectivity change to CONNECTING
    2020-07-17T06:32:27.012468Z info Received new config, creating new Envoy epoch 0
    2020-07-17T06:32:27.012467Z info sds Start SDS grpc server
    2020-07-17T06:32:27.012526Z info Epoch 0 starting
    2020-07-17T06:32:27.012519Z info Opening status port 15020
    2020-07-17T06:32:27.024242Z info Subchannel Connectivity change to READY
    2020-07-17T06:32:27.024273Z info pickfirstBalancer: HandleSubConnStateChange: 0xc000dc7500, {READY <nil>}
    2020-07-17T06:32:27.024279Z info Channel Connectivity change to READY
    2020-07-17T06:32:27.104683Z warn failed to read pod labels: open ./etc/istio/pod/labels: no such file or directory
    2020-07-17T06:32:27.105451Z info Envoy command: [-c etc/istio/proxy/envoy-rev0.json --restart-epoch 0 --drain-time-s 45 --parent-shutdown-time-s 60 --service-cluster istio-proxy --service-node sidecar~172.16.100.64~atlas-nsk-dev2-php7-01.vm~vm.svc.cluster.local --max-obj-name-len 189 --local-address-ip-version v4 --log-format %Y-%m-%dT%T.%fZ %l envoy %n %v -l warning --component-log-level misc:error]
    2020-07-17T06:32:27.178965Z info sds resource:default new connection
    2020-07-17T06:32:27.179012Z info sds Skipping waiting for ingress gateway secret
    2020-07-17T06:32:27.179229Z info cache adding watcher for file ./etc/certs/cert-chain.pem
    2020-07-17T06:32:27.179292Z info cache GenerateSecret from file default
    2020-07-17T06:32:27.179630Z info sds resource:default pushed key/cert pair to proxy
    2020-07-17T06:32:27.714243Z info sds resource:ROOTCA new connection
    2020-07-17T06:32:27.714309Z info sds Skipping waiting for ingress gateway secret
    2020-07-17T06:32:27.714504Z info cache adding watcher for file ./etc/certs/root-cert.pem
    2020-07-17T06:32:27.714540Z info cache GenerateSecret from file ROOTCA
    2020-07-17T06:32:27.714833Z info sds resource:ROOTCA pushed root cert to proxy

    cat /var/log/istio/istio.err.log
    2020-07-17T13:32:27.170801Z warning envoy config [bazel-out/k8-opt/bin/external/envoy/source/common/config/_virtual_includes/grpc_stream_lib/common/config/grpc_stream.h:92] StreamAggregatedResources gRPC config stream closed: 14, no healthy upstream
    2020-07-17T13:32:27.170887Z warning envoy config [bazel-out/k8-opt/bin/external/envoy/source/common/config/_virtual_includes/grpc_stream_lib/common/config/grpc_stream.h:54] Unable to establish new stream
    2020-07-17T13:32:27.177798Z warning envoy main [external/envoy/source/server/server.cc:475] there is no configured limit to the number of allowed active connections. Set a limit via the runtime key overload.global_downstream_max_connections
    2020-07-17T13:32:27.861824Z warning envoy filter [src/envoy/http/authn/http_filter_factory.cc:83] mTLS PERMISSIVE mode is used, connection can be either plaintext or TLS, and client cert can be omitted. Please consider to upgrade to mTLS STRICT mode for more secure configuration that only allows TLS connection with client cert. See https://istio.io/docs/tasks/security/mtls-migration/
    2020-07-17T13:32:27.862834Z warning envoy filter [src/envoy/http/authn/http_filter_factory.cc:83] mTLS PERMISSIVE mode is used, connection can be either plaintext or TLS, and client cert can be omitted. Please consider to upgrade to mTLS STRICT mode for more secure configuration that only allows TLS connection with client cert. See https://istio.io/docs/tasks/security/mtls-migration/

And the Istiod logs:

    kubectl logs -n=istio-system istiod-585c5d45f5-59xtj
    2020-07-17T06:32:27.653966Z warn getProxyServiceInstancesFromMetadata for atlas-nsk-dev2-php7-01.vm failed: proxy is in cluster , but controller is for cluster Kubernetes
    2020-07-17T06:32:27.654027Z info ads ADS:CDS: REQ sidecar~172.16.100.64~atlas-nsk-dev2-php7-01.vm~vm.svc.cluster.local-909 version:
    2020-07-17T06:32:27.655772Z info ads CDS: PUSH for node:atlas-nsk-dev2-php7-01.vm clusters:53 services:603 version:2020-07-17T06:29:03Z/131
    2020-07-17T06:32:27.705679Z info ads EDS: PUSH for node:atlas-nsk-dev2-php7-01.vm clusters:46 endpoints:94 empty:8
    2020-07-17T06:32:27.751724Z info ads LDS: PUSH for node:atlas-nsk-dev2-php7-01.vm listeners:55
    2020-07-17T06:32:27.865341Z info ads RDS: PUSH for node:atlas-nsk-dev2-php7-01.vm routes:28
    2020-07-17T06:34:19.978369Z info transport: loopyWriter.run returning. connection error: desc = "transport is closing"
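The node ID passed to Envoy in the agent log (--service-node sidecar~172.16.100.64~atlas-nsk-dev2-php7-01.vm~vm.svc.cluster.local), which Istiod also logs, is a ~-separated string of proxy type, IP address, proxy ID and DNS domain. Splitting it with a small illustrative helper (not Istio's own parser) shows that it carries no workload name or labels; in Istio those come from the proxy's node metadata, which is what the ISTIO_META_* environment variables feed.

```python
from typing import NamedTuple


class NodeID(NamedTuple):
    proxy_type: str
    ip: str
    proxy_id: str
    dns_domain: str


def parse_node_id(node: str) -> NodeID:
    """Split a node ID of the form type~ip~id~domain into its four fields."""
    parts = node.split("~")
    if len(parts) != 4:
        raise ValueError(f"expected 4 '~'-separated fields, got {len(parts)}: {node!r}")
    return NodeID(*parts)


# Value taken from the Envoy command line in the agent log.
node = parse_node_id("sidecar~172.16.100.64~atlas-nsk-dev2-php7-01.vm~vm.svc.cluster.local")
print(node.proxy_type)  # sidecar
print(node.proxy_id)    # atlas-nsk-dev2-php7-01.vm
print(node.dns_domain)  # vm.svc.cluster.local
```

The proxy ID here appears to be the VM hostname plus the namespace vm; nothing in it says "vmhttp".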

7. The Envoy proxy on the VM connected to the Istio control plane successfully.

8. Then add the VM's service to the mesh as an external service:

    istioctl experimental add-to-mesh external-service vmhttp ${VM_IP} http:8080 -n vm

9. Check the connection from the VM to a pod in the mesh:

    root@atlas-nsk-dev2-php7-01:~# curl -v http://order-service-demo.production.svc.cluster.local/actuator/health
    * Trying 172.16.206.153...
    * Connected to order-service-demo.production.svc.cluster.local (172.16.206.153) port 80 (#0)
    > GET /actuator/health HTTP/1.1
    > Host: order-service-demo.production.svc.cluster.local
    > User-Agent: curl/7.47.0
    > Accept: */*
    >
    < HTTP/1.1 200 OK
    < content-type: application/vnd.spring-boot.actuator.v3+json
    < content-length: 15
    < x-envoy-upstream-service-time: 3
    < date: Fri, 17 Jul 2020 06:40:08 GMT
    < server: istio-envoy
    < x-envoy-decorator-operation: order-service-demo.production.svc.cluster.local:80/*
    <
    * Connection #0 to host order-service-demo.production.svc.cluster.local left intact
    {"status":"UP"}

10. Check the connection from a pod to the VM:

    /app # curl -v vmhttp.vm.svc.cluster.local:8080
    * Trying 172.16.206.40:8080...
    * Connected to vmhttp.vm.svc.cluster.local (172.16.206.40) port 8080 (#0)
    > GET / HTTP/1.1
    > Host: vmhttp.vm.svc.cluster.local:8080
    > User-Agent: curl/7.69.1
    > Accept: */*
    >
    * Mark bundle as not supporting multiuse
    < HTTP/1.1 200 OK
    < server: envoy
    < date: Fri, 17 Jul 2020 06:42:14 GMT
    < content-type: text/html; charset=UTF-8
    < content-length: 812
    < x-envoy-upstream-service-time: 28
    <
    <!DOCTYPE html PUBLIC "-//W3C//DTD HTML 3.2 Final//EN"><html>
    <title>Directory listing for /</title>
    <body>
    <h2>Directory listing for /</h2>
    <hr>
    <ul>
    <li><a href=".ansible/">.ansible/</a>
    <li><a href=".aptitude/">.aptitude/</a>
    <li><a href=".bash_history">.bash_history</a>
    <li><a href=".bashrc">.bashrc</a>
    <li><a href=".cache/">.cache/</a>
    <li><a href=".composer/">.composer/</a>
    <li><a href=".config/">.config/</a>
    <li><a href=".lesshst">.lesshst</a>
    <li><a href=".local/">.local/</a>
    <li><a href=".nano/">.nano/</a>
    <li><a href=".profile">.profile</a>
    <li><a href=".rnd">.rnd</a>
    <li><a href=".selected_editor">.selected_editor</a>
    <li><a href=".ssh/">.ssh/</a>
    <li><a href=".viminfo">.viminfo</a>
    <li><a href="etc/">etc/</a>
    <li><a href="istio-sidecar.deb">istio-sidecar.deb</a>
    </ul>
    <hr>
    </body>
    </html>
    * Connection #0 to host vmhttp.vm.svc.cluster.local left intact

11. Then I looked at the metrics of the Envoy agent on the VM and saw that the destination app (the VM workload itself) is unknown:

    istio_requests_total{response_code="200",reporter="destination",source_workload="order-service-demo",source_workload_namespace="production",source_principal="unknown",source_app="order-service-demo",source_version="1.0-202027-2",destination_workload="unknown",destination_workload_namespace="vm",destination_principal="unknown",destination_app="unknown",destination_version="unknown",destination_service="vmhttp.vm.svc.cluster.local",destination_service_name="vmhttp",destination_service_namespace="vm",request_protocol="http",response_flags="-",grpc_response_status="",connection_security_policy="none",source_canonical_service="order-service-demo",destination_canonical_service="unknown",source_canonical_revision="1.0-202027-2",destination_canonical_revision="latest"} 1

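The workload on the VM is reported as unknown: destination_workload, destination_app, destination_version, destination_principal and destination_canonical_service are all "unknown", while the source side (order-service-demo) is fully populated.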
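With this many labels on one sample it is easy to misread which side is unknown. A rough stand-alone sketch that lists every label whose value is unknown; the regex covers the simple quoted values in this sample and is not a complete parser for the Prometheus text exposition format:

```python
import re
from typing import Dict, List

# label="value" pairs; values may contain backslash escapes such as \"
_LABEL_RE = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_labels(sample: str) -> Dict[str, str]:
    """Extract the labels of one Prometheus text-format sample line."""
    start, end = sample.index("{"), sample.rindex("}")
    return dict(_LABEL_RE.findall(sample[start + 1:end]))


def unknown_labels(sample: str) -> List[str]:
    """Sorted names of labels whose value is exactly "unknown"."""
    return sorted(k for k, v in parse_labels(sample).items() if v == "unknown")


# The sample read from the VM's Envoy in step 11.
SAMPLE = (
    'istio_requests_total{response_code="200",reporter="destination",'
    'source_workload="order-service-demo",source_workload_namespace="production",'
    'source_principal="unknown",source_app="order-service-demo",'
    'source_version="1.0-202027-2",destination_workload="unknown",'
    'destination_workload_namespace="vm",destination_principal="unknown",'
    'destination_app="unknown",destination_version="unknown",'
    'destination_service="vmhttp.vm.svc.cluster.local",destination_service_name="vmhttp",'
    'destination_service_namespace="vm",request_protocol="http",response_flags="-",'
    'grpc_response_status="",connection_security_policy="none",'
    'source_canonical_service="order-service-demo",destination_canonical_service="unknown",'
    'source_canonical_revision="1.0-202027-2",destination_canonical_revision="latest"} 1'
)

print(unknown_labels(SAMPLE))
```

On this sample it reports destination_app, destination_canonical_service, destination_principal, destination_version, destination_workload and source_principal. The two *_principal labels are expected to be unknown here regardless, because connection_security_policy is none (plaintext), so no peer certificate identity is available.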

My config:

Version (include the output of istioctl version --remote and kubectl version and helm version if you used Helm)

    ❯ ./istioctl version
    Open http://localhost:8000 for authentication
    client version: 1.6.5
    control plane version: 1.6.5
    data plane version: 1.6.5 (5 proxies), 1.6.0 (1 proxies)

    ❯ kubectl version
    Client Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.4", GitCommit:"224be7bdce5a9dd0c2fd0d46b83865648e2fe0ba", GitTreeState:"clean", BuildDate:"2019-12-11T12:47:40Z", GoVersion:"go1.12.12", Compiler:"gc", Platform:"darwin/amd64"}
    Server Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.6", GitCommit:"72c30166b2105cd7d3350f2c28a219e6abcd79eb", GitTreeState:"clean", BuildDate:"2020-01-18T23:23:21Z", GoVersion:"go1.13.5", Compiler:"gc", Platform:"linux/amd64"}

How was Istio installed?

Istio was installed with the native istioctl utility, using the spec.yml from step 1 (with meshExpansion enabled).

Environment where bug was observed (cloud vendor, OS, etc)


About this issue


- State: closed

- Created 4 years ago

- Reactions: 1

- Comments: 15 (5 by maintainers)

Thanks!!!

I added these parameters to /usr/local/bin/istio-start.sh:

    …
    exec su -s /bin/bash -c "INSTANCE_IP=${ISTIO_SVC_IP} POD_NAME=${POD_NAME} ISTIO_META_WORKLOAD_NAME=vmhttp ISTIO_METAJSON_LABELS={"app":"vmhttp"} POD_NAMESPACE=${NS} exec ${ISTIO_BIN_BASE}/pilot-agent proxy ${ISTIO_AGENT_FLAGS_ARRAY[*]} 2> ${ISTIO_LOG_DIR}/istio.err.log > ${ISTIO_LOG_DIR}/istio.log" ${EXEC_USER}
    …

It's working!!!
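The su -c argument in istio-start.sh is parsed twice: once by the shell running the script (as one double-quoted string) and again by the bash -c that su starts. A JSON value such as the app label therefore needs quoting that survives both rounds. As a rough check, the sketch below uses Python's shlex.split as a stand-in for POSIX shell tokenizing (it models quoting only, with no variable or brace expansion) and compares three simplified ways of writing the assignment inside the outer double quotes:

```python
import json
import shlex
from typing import List


def two_rounds(fragment: str) -> List[str]:
    """Tokenize fragment as the outer shell would, then tokenize the
    resulting string again as the inner bash -c would."""
    (once,) = shlex.split(fragment)
    return shlex.split(once)


def label_value(tokens: List[str]) -> str:
    """Right-hand side of the single NAME=value token."""
    (token,) = tokens
    return token.split("=", 1)[1]


candidates = {
    "as written": '"ISTIO_METAJSON_LABELS={"app":"vmhttp"}"',
    "backslash-escaped": r'"ISTIO_METAJSON_LABELS={\"app\":\"vmhttp\"}"',
    "escaped + single-quoted": r'''"ISTIO_METAJSON_LABELS='{\"app\":\"vmhttp\"}'"''',
}

for name, fragment in candidates.items():
    value = label_value(two_rounds(fragment))
    try:
        json.loads(value)
        verdict = "valid JSON"
    except json.JSONDecodeError:
        verdict = "not JSON"
    print(f"{name:24} -> {value:20} {verdict}")
```

Under this model only the third form delivers {"app":"vmhttp"} to pilot-agent; in the first two, one of the parsing rounds strips the quotes and the value arrives as {app:vmhttp}. The single quotes sit inside the outer double quotes so that ${POD_NAME} and the other variables in the real script are still expanded by the outer shell.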