istio: Istio Ingress Gateway (Envoy) memory leak with bi-directional gRPC streams

Bug description: The Istio Ingress Gateway's memory (more specifically, the Envoy proxy's) keeps increasing while bi-directional gRPC streams (HTTP/2) are open.

My test case uses my own load-testing tool, which opens one bi-directional gRPC stream per simulated client and then sends one message every 10 s. For this test I started 2500 clients in batches of 100 every 20 s.
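The load-testing tool itself is not part of this report. The following is only a minimal Python sketch of the client behaviour described above (one long-lived stream per client, one message per interval, clients started in timed batches). It assumes a hypothetical `FakeStream` class standing in for a real bi-directional gRPC stream, so it can run without generated gRPC stubs.

```python
import asyncio


class FakeStream:
    """Hypothetical stand-in for one bi-directional gRPC stream; it only counts messages."""

    def __init__(self) -> None:
        self.sent = 0

    async def send(self, message: bytes) -> None:
        # A real client would write `message` to the open stream here.
        self.sent += 1


async def run_client(stream: FakeStream, interval: float, stop: asyncio.Event) -> None:
    """Keep one stream open and send one message every `interval` seconds until stopped."""
    while not stop.is_set():
        await stream.send(b"ping")
        try:
            await asyncio.wait_for(stop.wait(), timeout=interval)
        except asyncio.TimeoutError:
            pass  # interval elapsed without a stop request: send the next message


async def ramp(total: int, batch: int, batch_delay: float,
               interval: float, hold: float) -> list[FakeStream]:
    """Start `total` clients in batches of `batch`, one batch every `batch_delay` seconds,
    then keep all of them running for `hold` seconds before stopping."""
    stop = asyncio.Event()
    streams: list[FakeStream] = []
    tasks = []
    while len(streams) < total:
        for _ in range(min(batch, total - len(streams))):
            stream = FakeStream()
            streams.append(stream)
            tasks.append(asyncio.create_task(run_client(stream, interval, stop)))
        if len(streams) < total:
            await asyncio.sleep(batch_delay)
    await asyncio.sleep(hold)  # steady state: every stream stays open
    stop.set()
    await asyncio.gather(*tasks)
    return streams
```

With the parameters from this test, the call would be `asyncio.run(ramp(total=2500, batch=100, batch_delay=20, interval=10, hold=600))`, where `hold=600` is an arbitrary choice. That gives 25 batches, the last one starting 480 s after the first, and about 2500 / 10 = 250 messages per second across all streams once every client is running.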

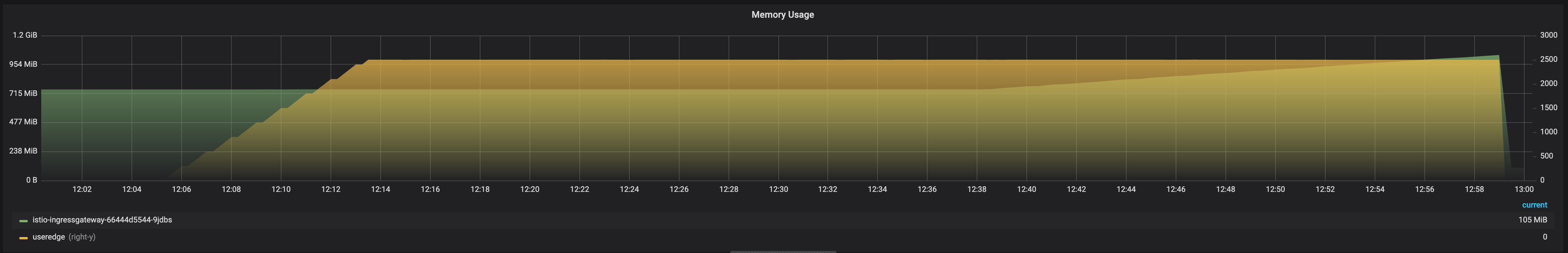

In this graph:

- purple: client connection count

- green: memory usage of the Istio Ingress Gateway pod

Looking at Envoy's stats:

- `server.total_connections: 2500` is stable

- `server.memory_allocated: 175166280` keeps increasing

- `server.memory_heap_size: 191889408` keeps increasing
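To track these three stats over time, two snapshots of Envoy's plain-text admin `/stats` output (one `name: value` pair per line; in Istio's proxies the admin interface listens on port 15000) can be diffed. This is a sketch under that assumption about the text format; how the snapshots are fetched is left out, and non-integer lines such as histograms are simply skipped.

```python
def parse_stats(text: str) -> dict[str, int]:
    """Parse Envoy admin `/stats` plain-text output ("name: value" per line).

    Lines whose value is not a plain non-negative integer (e.g. histograms) are skipped.
    """
    stats: dict[str, int] = {}
    for line in text.splitlines():
        name, sep, value = line.partition(": ")
        if not sep:
            continue
        value = value.strip()
        if value.isdigit():
            stats[name.strip()] = int(value)
    return stats


# The stats quoted in this report.
WATCHED = ("server.total_connections", "server.memory_allocated", "server.memory_heap_size")


def diff_snapshots(before: str, after: str) -> dict[str, int]:
    """Change of each watched stat between two snapshots (after minus before)."""
    b, a = parse_stats(before), parse_stats(after)
    return {k: a[k] - b[k] for k in WATCHED if k in a and k in b}
```

A leak of the kind described here would show up as a delta of 0 for `server.total_connections` alongside positive deltas for both memory stats.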

If I start only 500 clients, the memory keeps increasing, but at a slower pace. Also, if I stop the clients before the OOM kill, the proxy … My conclusion is that Envoy leaks a fixed amount of memory per connection.
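One way to check the "fixed amount per connection" hypothesis against the 500-client and 2500-client runs is to normalize the memory growth rate by the number of open connections: if the leak accrues per connection, the normalized rates from both runs should be roughly equal. The sketch below assumes (seconds, bytes) samples taken only while the connection count is flat, and fits the growth rate with an ordinary least-squares slope; the function names are hypothetical.

```python
def slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys against xs."""
    if len(xs) < 2 or len(xs) != len(ys):
        raise ValueError("need at least two paired samples")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


def growth_per_connection(seconds: list[float], mem_bytes: list[float],
                          connections: int) -> float:
    """Memory growth in bytes per second per open connection, over a flat-connection window."""
    return slope(seconds, mem_bytes) / connections
```

Comparing `growth_per_connection(...)` for the two runs makes the "slower pace with 500 clients" observation quantitative; a per-connection leak would give similar values, while a per-message or global leak would not necessarily.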

I had the issue on Istio 1.3.3 before upgrading, and I also have it on another cluster running 1.2.2.

On the 1.2.2 cluster, the proxy was already using 720 MB of RAM before I started my test. I think that memory was reused until usage went above that level, after which the proxy started grabbing more RAM until it was OOM-killed.

This is an early bug report, and I expect to gather more metrics from the Envoy proxy. I will probably also open an issue on the Envoy side.

Expected behaviour: the gateway's memory usage should increase when a new connection is made, then stay stable.

Steps to reproduce the bug: Open long-lived HTTP/2 connections (bi-directional gRPC streams) through the gateway, as in the test case described above.

Version (include the output of istioctl version --remote and kubectl version)

istioctl version --remote

client version: 1.3.0

control plane version: 1.3.4

kubectl version

Client Version: version.Info{Major:"1", Minor:"15", GitVersion:"v1.15.3", GitCommit:"2d3c76f9091b6bec110a5e63777c332469e0cba2", GitTreeState:"clean", BuildDate:"2019-08-19T12:36:28Z", GoVersion:"go1.12.9", Compiler:"gc", Platform:"darwin/amd64"}

Server Version: version.Info{Major:"1", Minor:"14", GitVersion:"v1.14.6", GitCommit:"96fac5cd13a5dc064f7d9f4f23030a6aeface6cc", GitTreeState:"clean", BuildDate:"2019-08-19T11:05:16Z", GoVersion:"go1.12.9", Compiler:"gc", Platform:"linux/amd64"}

How was Istio installed? From Helm templates.

Environment where the bug was observed (cloud vendor, OS, etc.): Azure AKS

About this issue

- State: closed

- Created 5 years ago

- Comments: 29 (19 by maintainers)

@amir-hadi Can you provide more information about service `X`? Is service `X` exposed on the ingress as well? Is the `processing-service` hitting `X` via the ingress or intra-cluster? Would setting `maxRetries` to `0` on the destination rule fix the leak from `processing-service` --> `X`?

We are running into this on 1.4.3.
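The `maxRetries` suggestion refers to the HTTP connection-pool settings of an Istio DestinationRule (`spec.trafficPolicy.connectionPool.http.maxRetries`). A minimal sketch of such a rule, built as a Python dict and printed as JSON (which `kubectl apply -f` also accepts), is below. The rule name and host are hypothetical placeholders for service `X`, and `networking.istio.io/v1alpha3` is assumed as the API version for this Istio generation. I have not verified how each Istio version translates a value of `0` into the generated Envoy cluster config, so that is worth checking on the proxy after applying it.

```python
import json


def no_retry_destination_rule(name: str, host: str) -> dict:
    """DestinationRule manifest that sets the HTTP connection-pool maxRetries to 0 for `host`."""
    return {
        "apiVersion": "networking.istio.io/v1alpha3",
        "kind": "DestinationRule",
        "metadata": {"name": name},
        "spec": {
            "host": host,
            "trafficPolicy": {
                "connectionPool": {
                    "http": {"maxRetries": 0},
                },
            },
        },
    }


if __name__ == "__main__":
    # "service-x" and its FQDN are hypothetical placeholders for service X.
    rule = no_retry_destination_rule("service-x", "service-x.default.svc.cluster.local")
    print(json.dumps(rule, indent=2))
```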